Speaking the Language of Data

By Michael LeVine, @thoughtculture

Imagine you are standing in the middle of a busy street – the kind of street you’d find in Midtown Manhattan on a Tuesday afternoon. Now, imagine that not a single person speaks your language, but everyone is talking. The streets are buzzing, and while you can hear the chatter, you almost never know what it means.

This is a data scientist’s reality. We accumulate terabytes of data every day, but if we can’t speak the language of the data, our data is useless. We could make every measurement pertaining to every aspect of the universe, but unless we know the laws of the universe, all we’ve done is waste a lot of time, energy, and data storage. What are scientists to do when they can’t speak the language of their data? They do what anyone might do if they found themselves lost in a foreign country – learn the language as quickly as possible.

Scientists apply theoretical models, statistical methods, and machine-learning algorithms to find structure in the data and separate the signal from the noise. While methods to collect data have made a huge contribution to the big data revolution, a great deal of the progress in data science can be attributed to the development of advanced techniques to analyze the data. The completion of the Human Genome Project in 2003 did not conclude genomic research – we added a new and important book to our genomic library, but it was only the beginning of a greater effort to learn how to read it. Nearly a decade later, in 2012, the ENCyclopedia Of DNA Elements (ENCODE) project claimed that 80% of the genome was functional. However, both their choice of statistical methods and their interpretations of the results have been met with harsh criticism, a clear indication that we are nowhere near consensus when it comes to the language of our own genetics. Until we can make real sense of the genetic lexicon, our massive library of genomic sequences will be of limited use.

It’s also important to remember that even in the best-case scenario, when the scientists are fluent in the language of their data, a direct interaction with each individual data point is not humanly possible due to the sheer size of the dataset. As such, scientists tend to have an impersonal relationship with their data, relying on computers for practicality. For example, while I could, in theory, read every English-language book in the New York Public Library, I wouldn’t live long enough to complete the task. To save time, I could use a computer to read the books for me and report the important messages. But first, I’d need to develop this computer so that it could read the words, comprehend their meaning and context, and separate the important information from the unimportant.

When you are faced with an ever-growing body of knowledge, finding the information relevant to your question and turning it into an answer can become difficult. IBM’s computer system, affectionately known as Watson, is designed to solve this type of problem: it takes a question, queries a database such as the Internet for relevant information, and then prepares an answer from its findings. But make no mistake: Watson is not a search engine. Rather, the purpose of this system is to build an understanding of human language in order to determine exactly what is being asked, and then formulate an exact answer from the massive amount of information available to it.

In 2011, Watson beat human Jeopardy! champions Ken Jennings and Brad Rutter, finishing with more than their combined winnings. Despite that win, its memory had to be cleared after it downloaded Urban Dictionary and came to the erroneous conclusion that “bullsh*t” was the appropriate response to many requests. Watson is nowhere near perfect, and IBM is constantly working on improvements and new applications tailored to its capabilities. IBM has recently teamed up with several hospitals to use Watson to query the massive database of medical literature, and this application promises to revolutionize the processes of diagnosis and treatment.

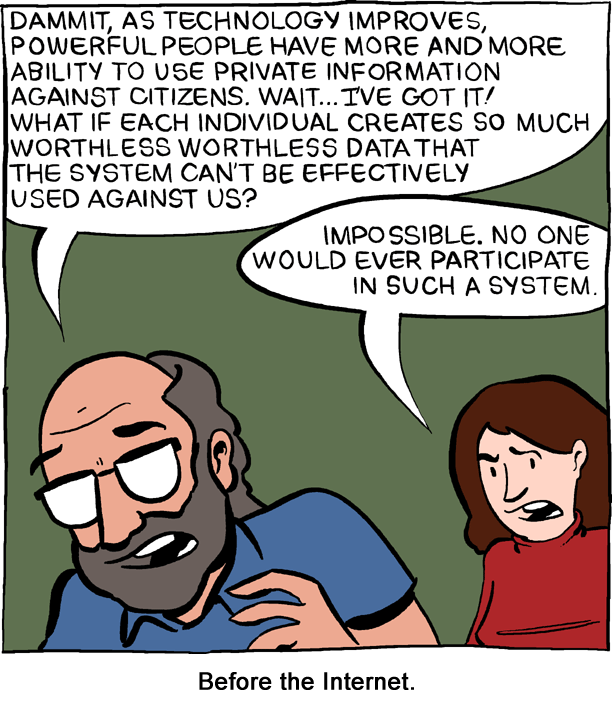

In reality, science isn’t just about accumulating data – it’s also about understanding it. We can now observe and record nearly every aspect of life across the entire planet, but our newfound ability to collect data doesn’t make us all-knowing, or even all-seeing. I’m certainly bothered that the NSA has a good portion of my phone and Internet history stored on a server somewhere, but this is due to my own beliefs about privacy, not a fear that Bond-type super-villains are watching my every movement as they diabolically pet a cat in a room full of screens. I’m confident that no human (other than myself) is reading my daily emails, and even if a computer is making an attempt, it faces far too much useless chatter with only a mediocre understanding of the English language. I personally don’t think that I’ll be hired for an intelligence operation in Barcelona anytime soon, but if the NSA is reading this, put in a good word for me at the CIA. You’ll find my resume in my Sent folder.

Image Source: Zach Weiner, Saturday Morning Breakfast Cereal
